Numbers tell you what happened. Qualitative data tells you why. That distinction matters more than most people realize. A customer satisfaction survey might show that 40% of users abandoned your onboarding flow. But only a qualitative method (a well-run interview, a contextual observation, a diary study) will tell you what they were thinking, feeling, and experiencing when they left.

Yet most guides on qualitative data collection list the same four methods, define them in two sentences each, and call it a day. That’s not enough if you actually want to collect data that holds up under scrutiny and leads to decisions worth making.

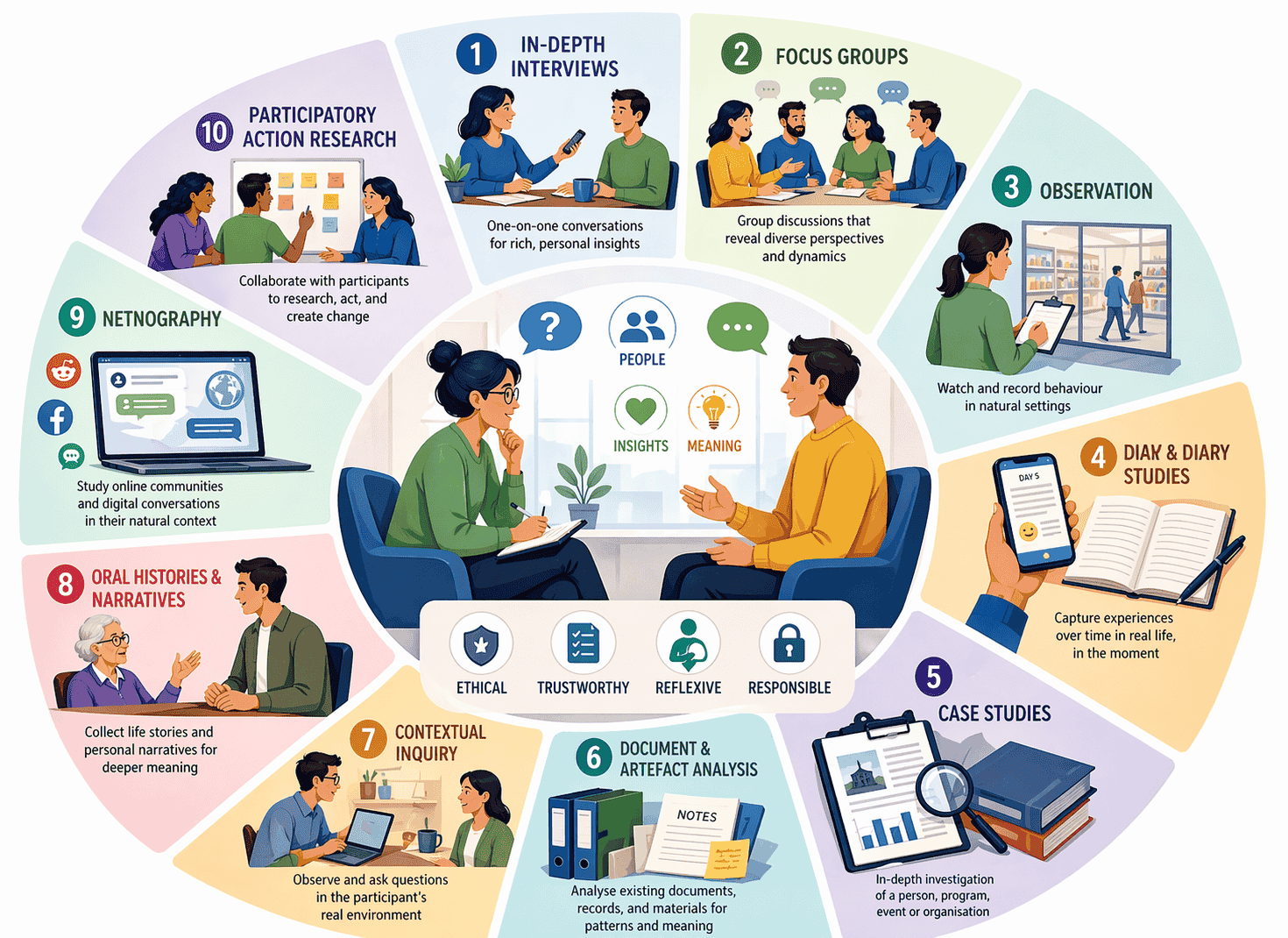

This guide covers all 10 qualitative data collection methods — including three emerging techniques most articles ignore — plus a practical framework for choosing the right one, the bias traps to avoid, and the ethics layer that now applies to every project.

What Makes Data “Qualitative”?

Qualitative data is non-numerical. It comes in the form of words, narratives, images, recordings, and observations. Rather than measuring how many or how much, qualitative methods explore how and why.

The goal isn’t statistical significance. It’s depth, context, and meaning.

That said, qualitative research isn’t soft or unscientific. When done well, it produces findings that are credible, transferable, dependable, and confirmable: the qualitative equivalents of validity and reliability, which we’ll return to in the trustworthiness section.

Many rigorous research designs combine qualitative and quantitative methods. This mixed-methods approach makes triangulation possible: cross-checking findings across methods reduces the blind spots that any single method creates and produces a fuller picture of reality.

The 10 Qualitative Data Collection Methods

1. In-Depth Interviews

The interview is the backbone of qualitative research. It’s a one-on-one conversation — conducted in person, over video, or by phone — that gives participants space to describe their experiences, opinions, and reasoning in their own words.

Interviews can be structured (fixed questions, consistent order), semi-structured (a topic guide with room to explore), or unstructured (open conversation led by the participant). Semi-structured interviews are the most common because they balance consistency with flexibility.

When to use it: When you need rich, personal insights. When your research question is “why” or “how.” When the topic is sensitive and group settings would inhibit honest responses.

Real example: A healthcare provider redesigning a patient discharge process ran 20 semi-structured interviews with recently discharged patients. The surveys had shown low satisfaction scores — but the interviews revealed the real issue: patients felt rushed and didn’t understand their aftercare instructions. That finding drove a specific, targeted intervention that surveys never could have surfaced.

Watch out for: Interviewer bias. The way you phrase a question, react to an answer, or nod your head can subtly push respondents in a direction. Record sessions, use neutral language, and train interviewers consistently.

2. Focus Groups

A focus group brings 6 to 12 participants together to discuss a topic guided by a moderator. The value isn’t just in individual answers — it’s in the group dynamic. Participants react to each other, challenge each other’s views, and surface perspectives that might never emerge in a solo interview.

When to use it: Brand perception research, concept testing, advertising feedback, community opinion gathering, and early-stage product research.

Real example: A food company testing three new product names ran focus groups across two cities. Participants didn’t just pick a favourite — they explained the emotional associations each name triggered. One name they’d internally loved was quietly killed when multiple groups associated it with a competing brand.

Watch out for: Groupthink. Dominant voices can drown out quieter participants. A skilled moderator actively draws out hesitant voices, uses techniques like written responses before group discussion, and follows up with individual probing questions.

3. Observation

Observational research involves watching and recording behaviour as it naturally occurs — without asking questions or interfering. The researcher becomes a witness, not a participant in the process.

It can be direct (the researcher is present in person) or indirect (reviewing recordings, session replays, or video footage). A third variant, participant observation, departs from the pure witness role: the researcher immerses themselves in the environment they’re studying.

When to use it: Usability research, retail analytics, workplace studies, educational research, and any setting where self-reported behaviour differs significantly from actual behaviour.

Real example: A supermarket chain wanted to understand why shoppers ignored a newly repositioned product display. Surveys said people noticed it. Observation revealed they didn’t — because the display was consistently blocked by shopping trolleys at peak hours. No survey would have caught that.

Watch out for: The observer effect. People sometimes behave differently when they know they’re being watched. Use unobtrusive observation methods where ethically possible, and always obtain informed consent.

4. Journals and Diary Studies

Diary studies ask participants to record their thoughts, experiences, and behaviours over a period of time — days, weeks, or even months. Entries can be written, audio-recorded, video-logged, or captured via a mobile app.

This method is powerful precisely because it captures data in the moment, rather than relying on memory recall in a later interview. Lived experience tends to get compressed and simplified in retrospect. Diary studies reduce that distortion.

When to use it: Longitudinal research, tracking behaviour change over time, UX research, patient journey studies, employee experience research.

Real example: A financial wellbeing app asked 30 users to keep a two-week audio diary about their daily money decisions. The entries revealed anxiety triggers that neither surveys nor interviews had captured: users felt fine discussing finances in the abstract but became audibly stressed when logging daily spending. That emotional data shaped the entire app redesign.

Watch out for: Participant fatigue. Long diary studies suffer drop-off. Keep entry prompts short, timely, and specific. Offer meaningful incentives and send regular reminders.

5. Case Studies

A case study is a deep, multi-source investigation of a single subject — a person, organisation, programme, event, or decision. It typically combines several data sources: interviews, documents, observations, and artefacts.

The power of a case study lies in its richness. It doesn’t just describe what happened — it builds a complete contextual understanding of why it happened and what conditions shaped it.

When to use it: Programme evaluation, organisational research, policy analysis, marketing storytelling, academic research where depth of context matters more than breadth of sample.

Real example: A nonprofit evaluating a youth employment programme used a case study approach to document three participants’ journeys over 12 months. Combining interview transcripts, attendance records, mentor notes, and participant reflections, they produced findings that secured a major funding renewal — findings that aggregate statistics alone would never have communicated.

Watch out for: Case studies aren’t generalisable. One organisation’s experience doesn’t apply universally. Be transparent about scope and context in your reporting.

6. Document and Artefact Analysis

This method involves systematically examining existing texts, records, and materials — meeting minutes, policy documents, social media posts, photographs, historical archives, medical records, or organisational reports.

Researchers look for patterns, themes, language use, and meaning. The analytical framework applied is often thematic analysis — a structured process of identifying, coding, and interpreting recurring themes across a dataset.
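As a rough illustration of the coding step, the sketch below tallies how often each code appears and how many distinct documents it appears in. It assumes a human researcher has already assigned the codes; the document IDs and code labels are hypothetical, and the counts support interpretation rather than replace it.

```python
from collections import Counter, defaultdict

# Hypothetical coded excerpts: (document id, code assigned by a researcher)
coded_excerpts = [
    ("minutes_2021_03", "funding pressure"),
    ("minutes_2021_03", "staff turnover"),
    ("policy_v2", "funding pressure"),
    ("forum_post_17", "distrust of leadership"),
    ("forum_post_17", "funding pressure"),
]


def summarise_codes(excerpts):
    """Count excerpts per code and the number of distinct documents per code."""
    frequency = Counter(code for _, code in excerpts)
    sources = defaultdict(set)
    for doc_id, code in excerpts:
        sources[code].add(doc_id)
    return {
        code: {"excerpts": count, "documents": len(sources[code])}
        for code, count in frequency.items()
    }


summary = summarise_codes(coded_excerpts)
# List the most frequent codes first
for code, stats in sorted(summary.items(), key=lambda kv: -kv[1]["excerpts"]):
    print(f"{code}: {stats['excerpts']} excerpts across {stats['documents']} documents")
```

A code that recurs across several independent documents is usually a stronger candidate for a theme than one that recurs within a single source, which is why the sketch tracks both numbers.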

When to use it: Historical research, policy analysis, media studies, content research, legal and compliance work, and as a supplementary source in almost any qualitative study.

Real example: A public health researcher studying vaccine hesitancy analysed three years of comments from parenting forums. Rather than asking people directly about their beliefs (which often produces socially desirable responses), the researcher examined naturally occurring conversations — and uncovered specific misinformation narratives that were influencing decision-making in ways that hadn’t appeared in any survey data.

Watch out for: Documents reflect the perspective and purpose of whoever created them. A company’s annual report and an employee’s diary tell very different stories about the same organisation. Always consider the source, context, and intent behind any document.

7. Contextual Inquiry

Contextual inquiry is a hybrid method that blends observation with interviewing. The researcher visits the participant in their natural environment — their home, workplace, or wherever the relevant activity takes place — and asks questions while observing them in action.

This method, rooted in UX and human-computer interaction research, produces uniquely grounded insights. You’re not asking someone to remember what they did — you’re watching them do it and asking questions in real time.

When to use it: Product design research, workplace studies, process improvement, software usability research, healthcare workflow analysis.

Real example: A software company designing a logistics tool sent researchers to spend two days with warehouse managers. Watching how managers actually used the existing system, and the workarounds they’d built around it, revealed seven pain points that no number of remote interviews had surfaced, including a critical step the team hadn’t even known existed.

Watch out for: Access and logistics. Getting into people’s real environments takes more time and negotiation than a Zoom call. Clearly communicate what the session involves and get consent for any recording.

8. Oral Histories and Narratives

Narrative research collects and analyses the stories people tell about their own lives and experiences. Oral history interviews, life history interviews, and personal narrative methods all fall into this category.

The focus here isn’t just on what happened but on how people make sense of it — the meaning they construct, the identity they project, and the way they frame cause and effect. This makes it particularly valuable in fields where human experience is the subject itself.

When to use it: Community research, healthcare and social work, historical research, human rights documentation, organisational culture studies.

Real example: A community organisation documenting the experiences of elderly residents facing displacement used oral history interviews to capture first-person accounts. The narratives were later presented to city planners — and proved far more persuasive than demographic statistics in shaping the policy outcome.

Watch out for: Narrative research requires significant time to conduct, transcribe, and analyse. It also requires emotional intelligence and sensitivity, particularly when stories involve trauma or loss.

9. Netnography

Netnography is ethnographic research adapted for online communities and digital spaces. Developed by researcher Robert Kozinets in the 1990s, it involves the systematic, immersive observation and analysis of naturally occurring interactions in online environments — forums, social media groups, review platforms, Reddit threads, gaming communities, and more.

Unlike social media monitoring (which is largely automated and quantitative), netnography is qualitative. The researcher immerses themselves in the community, interprets context and culture, and builds meaning from what they observe.

Today, AI-assisted netnography uses natural language processing to help researchers identify emotional trends and thematic patterns across large volumes of online text — though the interpretive, human-led layer remains essential.

When to use it: Consumer behaviour research, brand community studies, public health research, market research on niche communities, cultural studies.

Real example: A skincare brand wanted to understand how consumers genuinely talked about skin concerns before buying. Rather than running focus groups (where social desirability shapes answers), the research team conducted a netnographic study of beauty subreddits and forums. The naturally occurring language participants used — unfiltered, unsolicited, and honest — directly shaped the brand’s messaging strategy.

Watch out for: Ethics. Observing online communities without disclosure raises significant questions about informed consent, especially when communities have a reasonable expectation of privacy. Always consult your institution’s ethical guidelines and err on the side of transparency.

10. Participatory Action Research (PAR)

PAR is a collaborative approach where the researcher and the researched work together. Rather than studying a community from the outside, the researcher partners with participants to define the research questions, collect data, interpret findings, and take action.

This method is particularly powerful in community development, social justice research, and public health work — any context where the people being studied have a stake in the outcome and expertise the researcher lacks.

When to use it: Community development, education research, public health programmes, organisational change initiatives, social policy evaluation.

Real example: A public school district wanting to reduce student dropout rates partnered with students themselves in a PAR project. Students co-designed surveys, conducted peer interviews, and helped interpret the data. Their involvement produced findings the external researchers never would have reached — and, crucially, created buy-in for the resulting changes.

Watch out for: Power dynamics. PAR requires the researcher to genuinely share control, which can be uncomfortable and logistically complex. It also takes longer than conventional research, and the iterative nature makes timelines hard to predict.

The Ethics & Legal Layer

Qualitative research deals directly with human beings — their experiences, beliefs, behaviours, and stories. That means ethics isn’t optional. It’s foundational.

Informed consent is the starting point. Participants must know what the study involves, how their data will be used, how long it will be retained, and that they can withdraw at any time — without penalty. This applies regardless of whether you’re running in-person interviews or analysing public forum posts.

Confidentiality and anonymisation must be built into your data management from day one. Qualitative data — verbatim quotes, described behaviours, personal narratives — can be surprisingly identifiable even without names attached.
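One small piece of this can be sketched in code: replacing known participant names with stable placeholders before a transcript is shared. This is a minimal sketch, assuming you maintain your own list of names; the function name and the `[P1]` placeholder format are illustrative. It does nothing about indirect identifiers such as job titles, locations, or distinctive phrasing, which still need manual review.

```python
import re


def pseudonymise(text, names):
    """Replace each known name with a stable placeholder such as [P1]."""
    if not names:
        return text
    mapping = {name: f"[P{i}]" for i, name in enumerate(names, start=1)}
    # Try longer names first so a full name wins over a shorter overlapping one
    alternatives = sorted(mapping, key=len, reverse=True)
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(name) for name in alternatives) + r")\b"
    )
    return pattern.sub(lambda match: mapping[match.group(0)], text)


transcript = "Maria said Tom was late. Maria left."
print(pseudonymise(transcript, ["Maria", "Tom"]))
```

Keeping the name-to-placeholder mapping separate from the transcripts, and stored securely, lets you honour withdrawal requests without exposing identities in the working dataset.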

GDPR and data protection laws apply fully to qualitative research. If you’re collecting data from individuals in the EU or UK, you need a lawful basis for collection, a clear retention policy, and secure storage. The same principle increasingly applies in the US, where over 20 states now have comprehensive privacy laws with comparable requirements.

Digital and online research raises additional ethical flags. Netnography and social media analysis occupy a grey zone — public data isn’t always ethically available data. Always ask: Would participants reasonably expect their words to be used in research? If there’s doubt, seek consent or find a different approach.

Avoiding Researcher Bias: The Reflexivity Imperative

Here’s something most qualitative research guides skip entirely: you are part of your data collection instrument.

Your assumptions, experiences, cultural background, and even your mood on interview day all influence what you ask, how you listen, and what you notice. This isn’t a flaw — it’s the nature of qualitative inquiry. But ignoring it undermines your findings.

Reflexivity is the practice of continuously examining how your own perspective shapes the research process. It means keeping a reflexivity journal, noting your assumptions before and after data collection, and interrogating your interpretations as you go.

Alongside reflexivity, watch for these specific bias types:

- Confirmation bias — unconsciously favouring data that supports what you already believe

- Selection bias — recruiting participants who are easy to reach rather than representative

- Response bias — participants telling you what they think you want to hear

- Observer effect — subjects behaving differently because they know they’re being watched

Addressing bias doesn’t mean eliminating subjectivity — that’s impossible in qualitative work. It means being transparent about it and using rigorous methods to manage it.

Ensuring Trustworthiness: The Four Criteria

In quantitative research, you talk about validity and reliability. In qualitative research, the parallel framework is trustworthiness — built on four criteria:

Credibility — Are your findings an accurate representation of participants’ experiences? Build this through prolonged engagement, triangulation across multiple methods or data sources, and member checking — sharing your interpretations back with participants to confirm accuracy.

Transferability — Can your findings apply in other contexts? You don’t claim universal generalisability in qualitative research, but you do provide enough rich contextual description (called thick description) that readers can judge whether findings apply to their situation.

Dependability — Could another researcher follow your process and reach consistent findings? Document your decisions, methods, and analytical steps in an audit trail.

Confirmability — Are your findings shaped by the data, not just your preconceptions? Demonstrate this through reflexivity practices and peer debriefing — having a colleague review your analysis for blind spots.

One concept that often gets skipped: saturation. This is the point in data collection when new interviews, observations, or focus groups stop producing new themes. Saturation signals that you’ve collected enough data. Judging when you’ve reached it is one of the most important decisions in qualitative research, and one of the least discussed.
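One way to make saturation visible is to track how many previously unseen codes each session contributes. The sketch below is illustrative only: the session codes are hypothetical, and the stopping rule (two consecutive sessions with no new codes, set by `quiet_run`) is an assumption for the example, not an established threshold.

```python
def new_codes_per_session(sessions):
    """For each session in order, count codes that had not appeared before."""
    seen = set()
    counts = []
    for codes in sessions:
        fresh = set(codes) - seen
        counts.append(len(fresh))
        seen |= fresh
    return counts


def saturation_point(sessions, quiet_run=2):
    """Return the 1-based number of the session that completes a run of
    `quiet_run` consecutive sessions with no new codes, or None."""
    run = 0
    for index, count in enumerate(new_codes_per_session(sessions)):
        run = run + 1 if count == 0 else 0
        if run == quiet_run:
            return index + 1
    return None


# Hypothetical codes assigned to four interviews, in the order conducted
interviews = [
    ["rushed discharge", "unclear instructions"],
    ["unclear instructions", "medication worries"],
    ["rushed discharge"],
    ["medication worries", "unclear instructions"],
]

print(new_codes_per_session(interviews))
print(saturation_point(interviews))
```

A flat tail in these counts is a prompt for judgement, not proof: if the later participants all resemble the earlier ones, the absence of new codes may reflect a narrow sample rather than a fully explored topic.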

How to Choose the Right Qualitative Method

With ten methods in front of you, choosing the right one comes down to five questions:

1. What is your core research question? “Why” and “how” questions point toward interviews, contextual inquiry, and narrative methods. “What do people think?” points toward focus groups and netnography. “What do people do?” points toward observation and diary studies.

2. How sensitive is the topic? Sensitive topics (mental health, finances, discrimination) call for individual interviews rather than group methods. Anonymity and one-on-one settings produce more honest responses.

3. What is your timeline and budget? Interviews and focus groups are faster to set up. Ethnography, PAR, and diary studies require weeks or months. Netnography can be cost-effective but requires significant analytical expertise.

4. How much depth vs. breadth do you need? Deep, individual insight → interviews, case studies, oral histories. Broader cultural or community perspective → focus groups, netnography, observation.

5. Does the research require collaboration with participants? If participants have lived expertise that the researcher doesn’t, and if co-ownership of the findings matters, PAR is often the most ethical and effective choice.

| Research Goal | Best Method(s) |
|---|---|
| Understand user behaviour in context | Contextual Inquiry, Observation |
| Explore lived experience or personal stories | In-Depth Interviews, Narrative/Oral History |
| Gauge community or group opinion | Focus Groups, Netnography |
| Track behaviour or experience over time | Diary Studies |
| Investigate organisational or social phenomena | Case Studies, Document Analysis |
| Co-create solutions with a community | Participatory Action Research |
| Analyse online culture or brand communities | Netnography |

Common Mistakes to Avoid

Even experienced researchers fall into these traps:

- Starting without a clear research question. Qualitative data collection without a defined question produces mountains of rich data with no analytical direction.

- Under-recruiting. Reaching saturation typically requires more participants than researchers initially plan for. Build in flexibility.

- Skipping pilot testing. Run a pilot interview or focus group before your main data collection. You’ll almost always refine your questions or process as a result.

- Treating transcription as analysis. Transcribing interviews is not the same as analysing them. Thematic analysis, discourse analysis, and narrative analysis are distinct, structured processes.

- Neglecting context in reporting. Qualitative findings stripped of context lose their meaning. Always present data with the situational detail that makes it interpretable.

Final Thoughts

Qualitative data collection is both an art and a discipline. The art lies in building trust with participants, asking questions that open rather than close conversations, and staying curious when data surprises you. The discipline lies in rigour — choosing the right method, managing bias, ensuring trustworthiness, and respecting the ethical obligations that come with studying human experience.

The methods in this guide aren’t interchangeable. Each one is built for a particular kind of question, a particular kind of participant, and a particular kind of insight. Choose carefully, plan thoroughly, and always keep the human at the centre of the process.

Because that’s what qualitative research is ultimately about — not data points, but people.

This article is part of a topical cluster on data collection methods. For a complete overview of all methods (quantitative, qualitative, and emerging), see our guide: [15 Data Collection Methods Explained (With How to Choose the Right One)]. For the quantitative counterpart to this article, see: [Quantitative Data Collection Methods: 9 Techniques, Real Examples & When to Use Each].