AI transformation is a problem of governance because organizations struggle more with managing accountability, risk, data, and decision-making than with the AI technology itself. Companies across industries are investing heavily in automation, machine learning, and generative systems. Yet despite this rapid adoption, many organizations are quietly struggling to turn AI into real business value.

The reason isn’t a lack of technology. It’s governance.

AI transformation is often framed as a technical challenge, but in reality it is a governance problem: one that involves leadership, accountability, risk management, and decision-making. If governance is weak, even the most advanced AI systems fail to deliver.

This guide explains what that means, why it matters, and how organizations can fix it.

What Is AI Transformation?

AI transformation goes beyond simply using AI tools. It refers to embedding AI into the core of business operations, strategy, and decision-making.

AI Adoption vs AI Transformation

Many companies confuse adoption with transformation.

- AI adoption: Using tools like chatbots, analytics, or automation in isolated areas

- AI transformation: Integrating AI across departments to drive long-term impact

Adoption is easy. Transformation is complex—and that’s where governance becomes critical.

Real-World Examples

- A healthcare provider using AI for diagnostics across multiple departments

- A financial firm automating risk assessment and fraud detection

- A marketing team using AI to personalize campaigns at scale

These examples require coordination, policies, and oversight—not just tools.

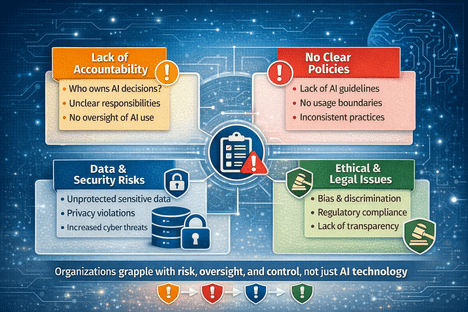

Why AI Transformation Is a Governance Problem

The core issue is simple: AI systems make decisions, and every decision needs accountability.

Without governance, organizations lose control over how AI is built, deployed, and used.

Lack of Clear Ownership

In many companies, no one truly “owns” AI.

- IT teams manage infrastructure

- Data teams handle models

- Business teams use the outputs

This creates confusion. When something goes wrong, responsibility is unclear.

Absence of Policies and Frameworks

AI often gets deployed faster than policies are created.

Without clear guidelines:

- Teams use inconsistent data

- Models behave unpredictably

- Ethical boundaries are ignored

Data Governance Challenges

AI depends on data—often sensitive, dynamic, and complex.

Poor data governance leads to:

- Inaccurate predictions

- Privacy violations

- Compliance risks

Ethical and Regulatory Risks

AI introduces serious ethical concerns:

- Bias in decision-making

- Lack of transparency

- Unfair outcomes

Regulations are evolving, and organizations must stay compliant or face legal consequences.

Scaling Without Control

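AI often works well in pilot projects, where scope is narrow and the team watches the system closely. But when companies try to scale, problems emerge:

- Models drift over time as real-world data diverges from the data they were trained on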

- Systems become harder to monitor

- Risks multiply

Without governance, scaling AI becomes chaotic.

Key Governance Challenges in AI Transformation

Understanding the challenges helps explain why so many AI initiatives fail.

Who Owns AI Decisions?

Ownership is one of the biggest gaps.

IT vs Business Conflict

IT teams focus on systems and security.

Business teams focus on outcomes and speed.

Without alignment, decisions become fragmented.

Leadership Gaps

Many executives support AI in theory but lack a clear governance strategy. This creates a disconnect between vision and execution.

Managing Risk and Bias

AI systems are not neutral. They reflect the data they’re trained on.

Algorithmic Bias

Bias can lead to unfair decisions in hiring, lending, or healthcare. This is not just a technical issue—it’s a governance failure.

Compliance Requirements

Laws around data protection and AI usage are increasing. Organizations must ensure their AI systems meet legal standards.

Data Privacy and Security

AI systems often process large volumes of personal data.

Without strong governance:

- Data leaks become more likely

- Unauthorized access increases

- Trust is damaged

Lack of Standardization

Many organizations build AI systems in silos.

This leads to:

- Inconsistent practices

- Duplicate efforts

- Difficulty scaling

Governance provides the structure needed to standardize processes.

The Impact of Poor AI Governance

When governance is weak, the consequences are serious.

Failed AI Projects

Many AI initiatives never move beyond the pilot stage. Others fail to deliver ROI due to poor oversight.

Financial Losses

AI investments are expensive. Without governance, companies waste resources on ineffective systems.

Legal and Compliance Risks

Regulatory violations can result in fines and reputational damage.

Loss of Trust

Customers and stakeholders expect fairness and transparency. Poor governance erodes trust quickly.

What Is AI Governance?

AI governance refers to the framework of policies, processes, and controls that guide how AI is developed and used.

Core Components of AI Governance

Policies and Guidelines

Clear rules define:

- How AI can be used

- What data is allowed

- Ethical boundaries

Monitoring and Auditing

AI systems must be continuously monitored to ensure they behave as expected.

Risk Management

Organizations need systems to identify, assess, and mitigate risks related to AI.
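
One lightweight way to make "identify, assess, and mitigate" concrete is a risk register that scores each risk. The sketch below is illustrative only: the `Risk` class, the 1 to 5 scales, the likelihood-times-impact score, and the threshold of 12 are all assumptions, not a prescribed standard.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain); scale is an assumption
    impact: int      # 1 (minor) to 5 (severe); scale is an assumption
    mitigation: str = ""

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring, a common risk-matrix convention.
        return self.likelihood * self.impact


def prioritize(risks, threshold=12):
    """Return risks scoring at or above the threshold, highest score first."""
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


# Hypothetical register entries for illustration.
register = [
    Risk("Biased lending decisions", likelihood=4, impact=5),
    Risk("Model drift in demand forecasts", likelihood=3, impact=3),
    Risk("Personal data leak from training set", likelihood=3, impact=5),
]

top_risks = prioritize(register)
for risk in top_risks:
    print(f"{risk.score:>2}  {risk.description}")
```

The scoring itself matters less than the habit: every AI system gets an entry, and anything above the agreed threshold needs a named mitigation before it ships.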

How to Fix the Governance Problem in AI Transformation

Solving this problem requires a structured approach.

Define Clear Ownership

Assign responsibility for AI initiatives.

- Create dedicated AI leadership roles

- Ensure accountability across teams
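
Ownership is easier to enforce when it is recorded somewhere that can be checked. As a minimal sketch, assuming a simple in-house model inventory (the `ModelRecord` fields and the example entries are hypothetical), a script can flag any model that lacks a named business or technical owner:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRecord:
    name: str
    business_owner: str   # accountable for how the outputs are used
    technical_owner: str  # accountable for the model and its infrastructure
    risk_tier: str        # e.g. "low", "medium", "high" (assumed labels)


def find_ownership_gaps(records):
    """Return the names of models missing either owner."""
    return [
        r.name
        for r in records
        if not r.business_owner.strip() or not r.technical_owner.strip()
    ]


# Hypothetical inventory for illustration.
inventory = [
    ModelRecord("fraud-scoring", "Head of Risk", "ML Platform Team", "high"),
    ModelRecord("support-chatbot", "", "Data Science", "medium"),
]

gaps = find_ownership_gaps(inventory)
print(gaps)
```

Running a check like this before each release turns "ensure accountability" from a principle into a gate.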

Build a Governance Framework

Develop a formal structure that includes:

- Policies

- Standards

- Approval processes

This ensures consistency across the organization.

Implement Ethical Guidelines

Ethics should be built into every stage of AI development.

- Identify potential biases

- Ensure transparency

- Promote fairness
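
Identifying bias usually starts with measuring it. The sketch below compares approval rates across groups, one simple fairness check often called the demographic parity gap. It is an illustration under stated assumptions: the group labels and sample decisions are made up, and a real review would consider several metrics rather than this one alone.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Compute the share of positive outcomes per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        if outcome:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(decisions):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical model decisions for two groups.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(sample)
gap = parity_gap(sample)
print(rates, gap)
```

A large gap does not prove unfairness on its own, but it is a signal that someone accountable should investigate before the model is used for decisions.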

Strengthen Data Governance

High-quality data is essential.

- Standardize data collection

- Ensure accuracy and security

- Control access
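
The accuracy and access points above can both be expressed as small automated checks. This is a minimal sketch, assuming a hypothetical role-to-dataset policy (`ACCESS_POLICY`) and made-up field names; a production system would rely on the access controls of its data platform rather than an in-memory dictionary.

```python
# Hypothetical policy: which roles may read which datasets.
ACCESS_POLICY = {
    "customer_pii": {"data_steward", "compliance"},
    "transactions": {"data_steward", "data_scientist"},
}


def can_access(role, dataset, policy=ACCESS_POLICY):
    """Deny by default: unknown datasets or roles get no access."""
    return role in policy.get(dataset, set())


def rows_missing_fields(rows, required_fields):
    """Return indices of rows where a required field is absent or empty."""
    bad = []
    for index, row in enumerate(rows):
        if any(row.get(field) in (None, "") for field in required_fields):
            bad.append(index)
    return bad


# Hypothetical records for illustration.
rows = [
    {"customer_id": "c-1", "amount": 120.0},
    {"customer_id": "", "amount": 75.5},
    {"customer_id": "c-3", "amount": None},
]

print(can_access("data_scientist", "customer_pii"))
print(rows_missing_fields(rows, ["customer_id", "amount"]))
```

The deny-by-default choice is deliberate: a dataset nobody has classified yet should be unreadable until someone takes responsibility for it.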

Monitor and Improve Continuously

AI systems evolve over time.

- Track performance

- Audit outcomes

- Update models regularly

Governance is not a one-time effort—it’s ongoing.
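
Tracking performance can include checking whether live inputs still resemble the data a model was built on. One common technique is the Population Stability Index (PSI), sketched here in plain Python. The bin count, the smoothing value `eps`, the synthetic scores, and the 0.25 review threshold are assumptions for illustration (0.25 is a frequently cited rule of thumb, not a universal standard).

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample.

    Bins are equal-width over the baseline's range; live values outside
    that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # all baseline values identical; avoid dividing by zero

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            index = min(max(index, 0), bins - 1)
            counts[index] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(count / len(values), eps) for count in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic scores: the live sample is shifted upward by 0.5.
baseline_scores = [i / 100 for i in range(100)]
live_scores = [score + 0.5 for score in baseline_scores]

drift = psi(baseline_scores, live_scores)
needs_review = drift > 0.25  # assumed threshold; tune per model
print(round(drift, 2), needs_review)
```

A check like this is only useful if someone owns the alert: the governance question is who reviews the model when `needs_review` turns true.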

Best Practices for AI Governance

Organizations that succeed with AI follow a few key principles:

Align AI With Business Goals

AI should support clear objectives, not exist as a standalone initiative.

Create Cross-Functional Teams

Bring together IT, data, legal, and business teams to ensure balanced decision-making.

Invest in Governance Tools

Use platforms that support monitoring, compliance, and risk management.

Stay Updated on Regulations

AI laws are evolving. Staying informed helps avoid legal issues.

Common Mistakes to Avoid

Many organizations repeat the same errors:

Focusing Only on Technology

AI tools are important, but governance determines success.

Ignoring Governance Early

Waiting too long to establish governance leads to bigger problems later.

Lack of Skilled Leadership

Without experienced leaders, AI initiatives lack direction.

Poor Data Management

Bad data leads to bad outcomes—no matter how advanced the AI is.

Conclusion: Governance Is the Foundation of AI Success

AI transformation is not just about innovation—it’s about control.

Organizations that treat AI as a purely technical challenge often fail. Those that prioritize governance build systems that are scalable, ethical, and reliable.

The takeaway is clear:

AI transformation succeeds when governance leads the way.

If companies want to unlock the full potential of AI, they must focus less on tools—and more on how those tools are managed.